Model First by Year End: How Two People Run a Company That Feels Like Ten

We decided that by December 2026, every repeatable task in our company would be handled by AI. Two months in, we are already losing track of what we still do manually.

There's a moment that keeps happening. I'm in a meeting, someone asks me a question, and before I can pull up the answer, my AI assistant has already found it. Not in a "here's a Google result" way. In an "I know your calendar, your CRM, your last three conversations with this person, and the project status" way.

That moment is what Model First feels like from the inside. And it changes how you think about building a company.

What Model First Actually Means

Model First is a simple idea with uncomfortable implications. Every process in your company gets redesigned with AI as the default operator. Humans stay in the loop only where judgment, relationships, or creativity genuinely require it.

Thomas and I run Product Masterclass together. We train product managers, run one of Europe's largest product conferences, and do consulting work. That's a lot of surface area for two people. Model First is what lets us cover it without burning out or scaling the team.

What My Day Actually Looks Like

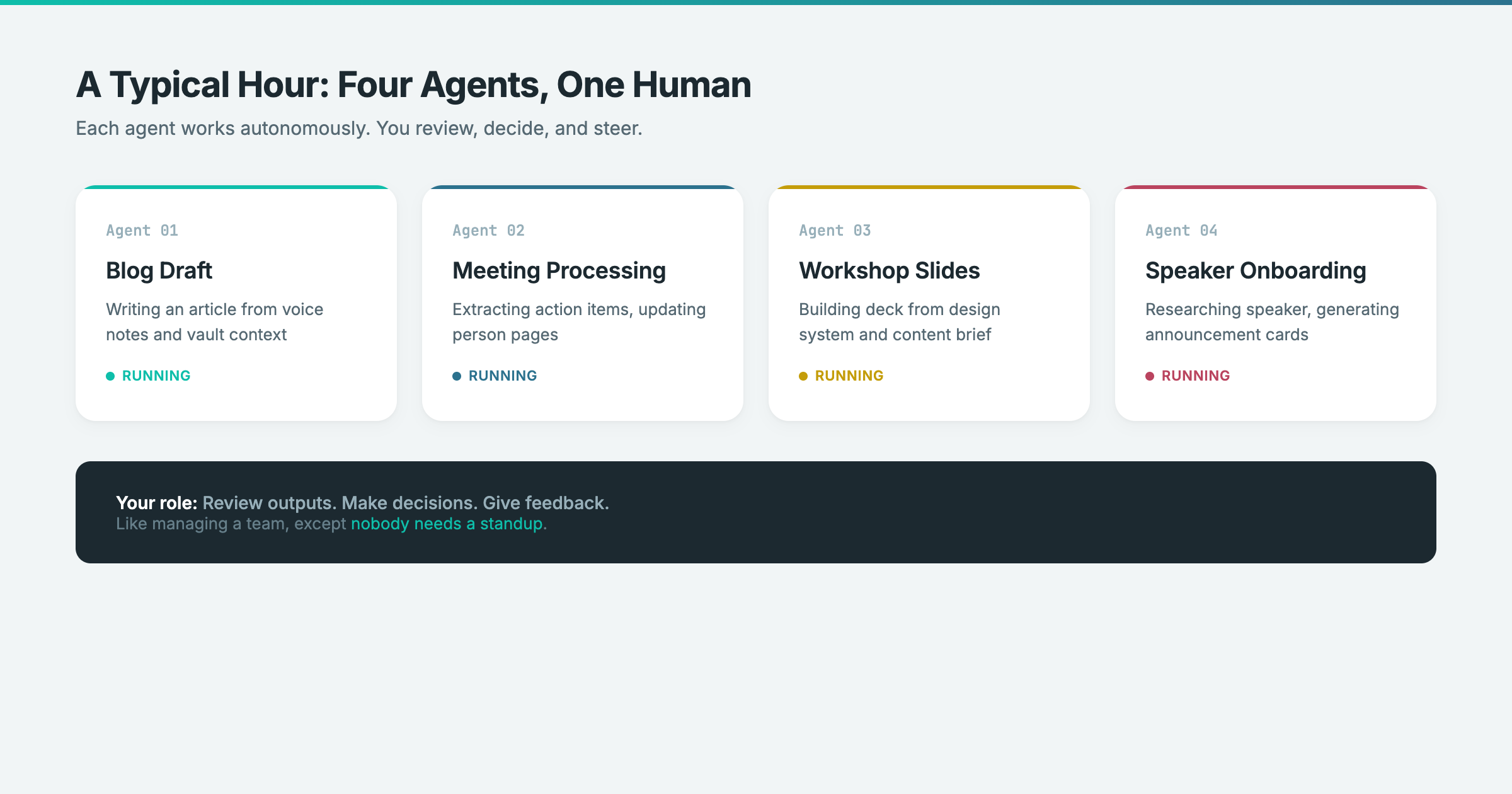

I have three to four AI agents running in parallel most of the time. Each one works on a different task. One is drafting a blog post. Another is processing meeting transcripts and updating person pages. A third is preparing slides for a workshop. I'm reviewing outputs, giving feedback, and making decisions. It feels like managing a small team, except nobody needs a standup.

Here's a concrete example. Last Friday morning, my system flagged: "You have a talk next Thursday and you haven't prepared anything yet. Want to start today?" That happened because the AI knows my calendar, knows I haven't created any files related to that event, and has learned that I tend to procrastinate on talk prep. That's not a reminder I set. That's contextual awareness.
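To make the mechanics less mysterious, here is a minimal sketch of the first two signals in that example: an event is coming up, and no file related to it exists yet. It is an illustration under stated assumptions, not how the actual system works. The `Event` type, the `slug` field, and the `unprepared_events` function are hypothetical names, and the learned procrastination pattern is not modeled at all.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Event:
    title: str
    day: date
    slug: str  # hypothetical: keyword expected in the names of related files


def unprepared_events(events, file_names, today, horizon_days=7):
    """Return events within the horizon that no file name mentions by slug."""
    cutoff = today + timedelta(days=horizon_days)
    lowered = [name.lower() for name in file_names]
    flagged = []
    for event in events:
        # Skip events that are in the past or too far away to matter yet.
        if not (today <= event.day <= cutoff):
            continue
        # An event counts as "prepared" if any file name contains its slug.
        if not any(event.slug.lower() in name for name in lowered):
            flagged.append(event)
    return flagged
```

Given a calendar export and a listing of the folder, the function returns the events worth nudging about. Deciding how to phrase the nudge is left to the model.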

The workshop slides you'd have seen if you had attended my session yesterday? Built in 45 minutes. Not by a designer. By me talking to Claude, referencing our design system, and iterating twice. The AI pulled the structure from our shared knowledge base, applied our brand guidelines, and generated the full deck.

The Stack Is Embarrassingly Simple

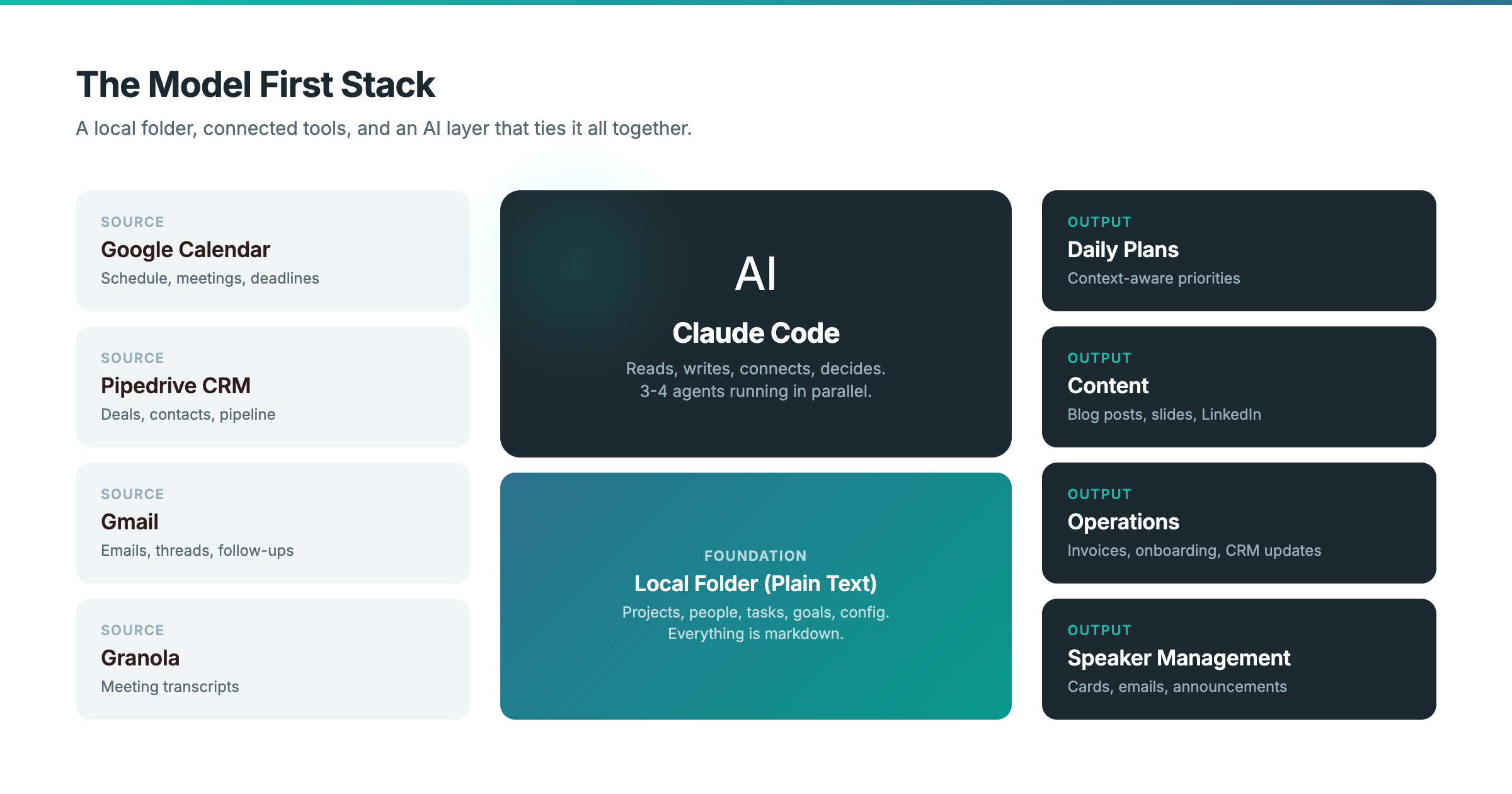

People expect some sophisticated infrastructure. The reality is a folder on my hard drive.

That's it. A local folder with a specific structure. Inside it: an inbox for captured information, project folders, people pages, task lists, quarterly goals, and a system configuration folder. Everything is plain text files, mostly markdown. No database. No cloud platform. No vendor lock-in.

The magic isn't the folder. It's what connects to it. My calendar syncs in. My CRM data flows through. Email is accessible. Meeting transcripts land automatically. Analytics dashboards are queryable. And Claude Code sits on top of all of it, reading and writing to that folder structure like a team member with perfect memory.

The shared folder between Thomas and me contains our brand guidelines, customer information, content drafts, and operational playbooks. When I ask the AI to write a LinkedIn post, it doesn't guess our tone. It reads the guidelines. When I ask it to onboard a new course participant, it pulls the email data, creates the CRM entry, generates the invoice, and presents everything for my review.

The System Builds Itself

This is the part that still surprises me. When I needed speaker announcement cards for the conference, I described what I wanted and it built the rendering pipeline. When I needed CRM data in my daily planning, I described the workflow and it connected the pieces.

I deliberately shifted from builder to reviewer. The old approach was: understand the problem, design the solution, build it, maintain it. The new approach is: describe what you need, review the plan, approve it, let it build. If something breaks, describe the problem and let it fix itself.

That shift felt strange at first. I've been a software engineer for most of my career. Letting go of the "I need to understand every layer" instinct took weeks. The moment it clicked was when I asked the system to connect Gmail and it asked me three clarifying questions, wired everything up, and it just worked. I realized I'd spent years solving problems like that manually, not because I had to, but because I didn't know another way.

The skill requirement flips. You don't need to know how to code. You need to know how to describe what you want clearly, how to evaluate whether a plan makes sense, and when to push back. Those are product management skills. Which is fitting, given what we teach.

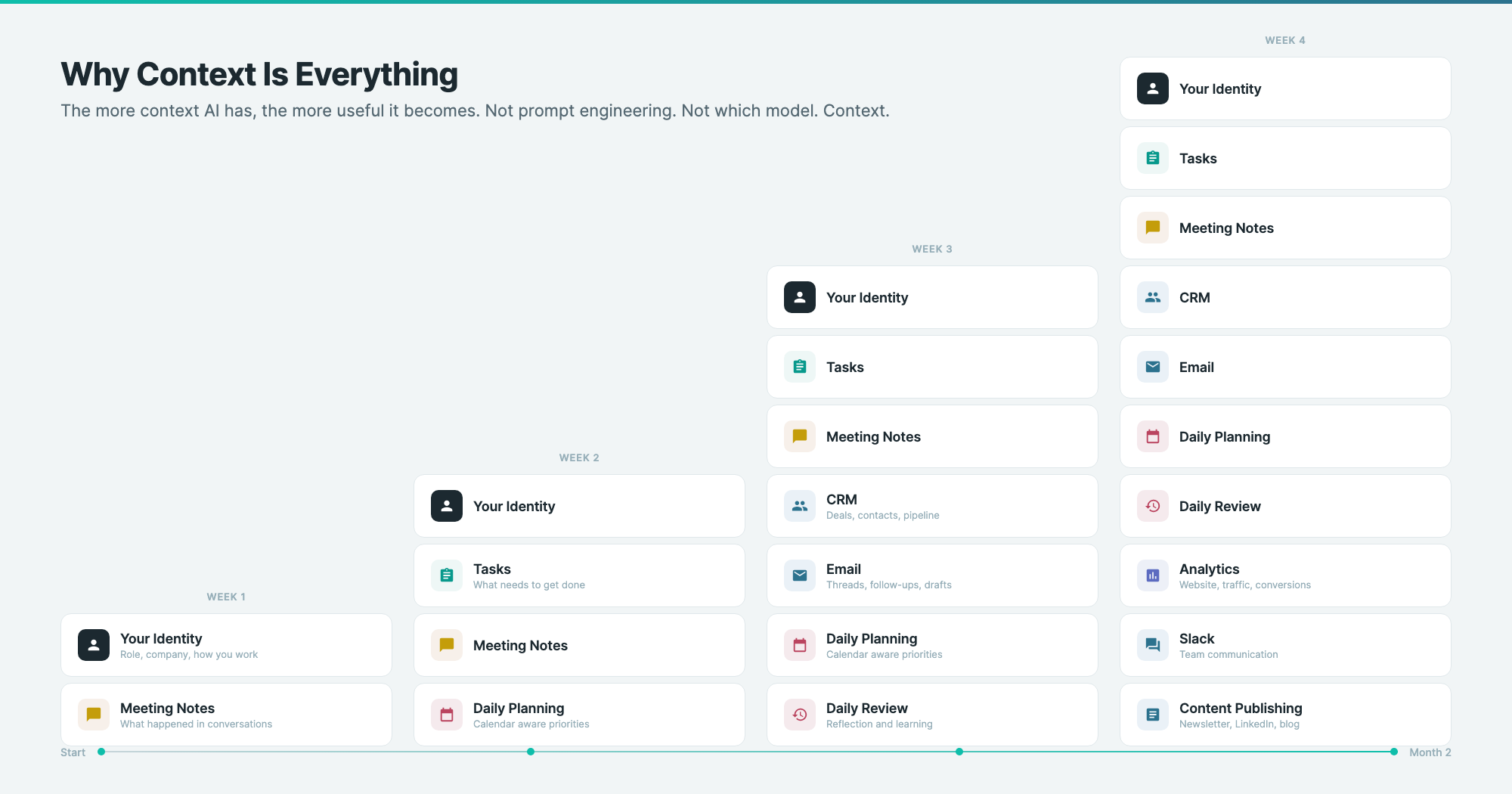

Why Context Is Everything

The single biggest factor in how useful AI becomes is how much context it has. Not prompt engineering. Not which model you use. Context.

I transcribe most of my meetings. I don't keep the audio, just the live transcript. Because if the AI knows what happened in every conversation, it can connect dots I'd miss. "The person you're meeting tomorrow mentioned budget constraints last week. Here's what they said." That's not a feature I configured. It emerges from having enough context.

This is also why the local folder approach matters. Every file I create, every note I take, every plan I make becomes part of the AI's working memory. After two months, the system knows my quarterly goals, my client relationships, my energy patterns, and my tendency to delay important-but-not-urgent work. It uses all of that when it plans my day.

The implication for any company is clear. If your AI tools start from zero every session, you're wasting most of their potential. The real value unlocks when AI has persistent, structured access to your actual business context.

What This Means for Teams

We're two people. But the same principle scales. Imagine a product team where the AI knows your roadmap rationale, your interview transcripts, your technical constraints, and your stakeholder map. Every ticket it writes is grounded in evidence. Every status update references actual progress. Every decision is checked against strategy.

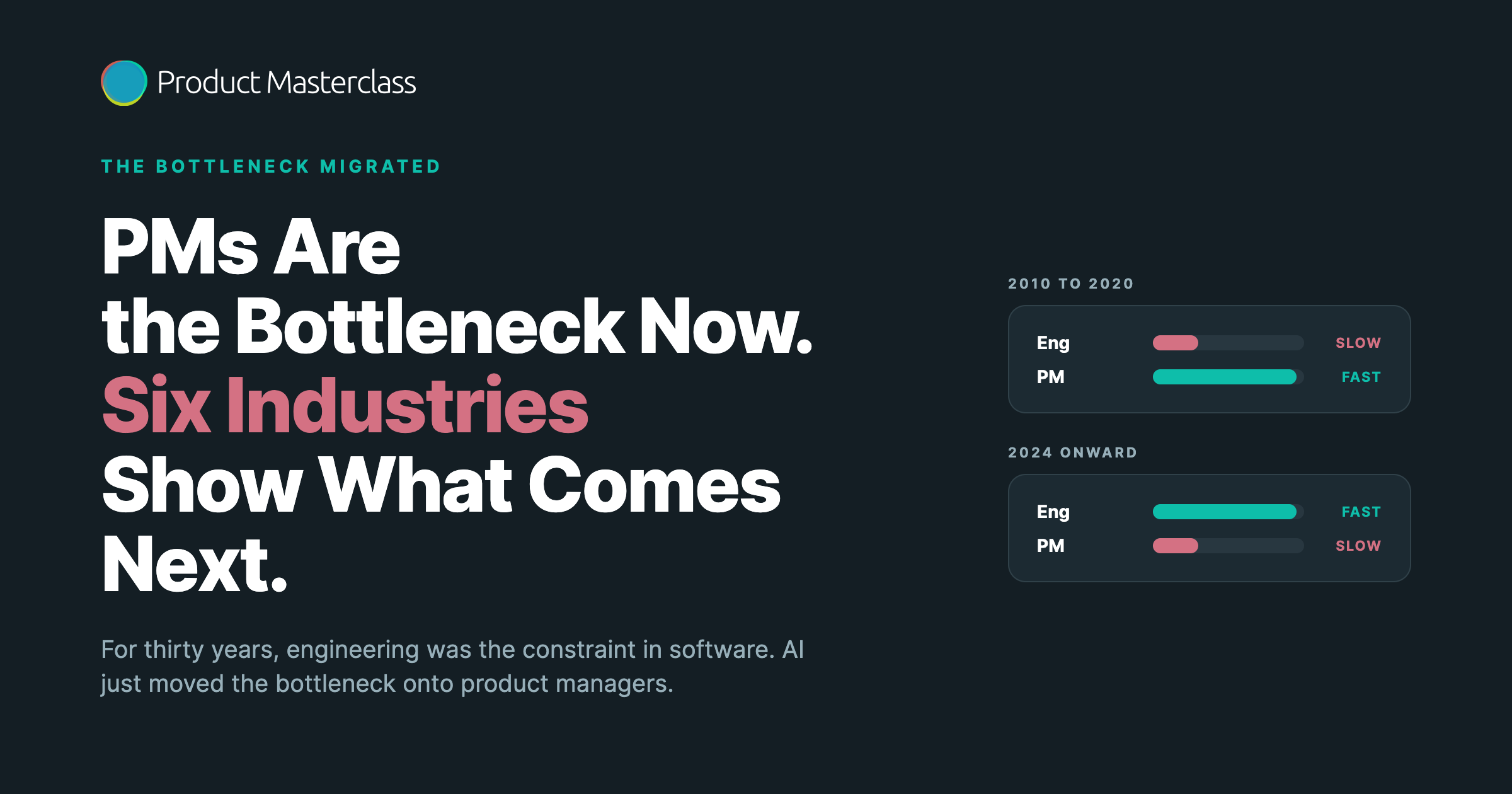

That's not science fiction. That's what happens when you commit to Model First and systematically feed context into the system. The technology is already there. The bottleneck is organizational willingness to restructure how information flows.

The Honest Part

Is it uncomfortable? Yes. I studied computer science. I took machine learning courses in 2011 where my professor said nothing new would happen in the field. Now I'm watching the field make half of what I learned irrelevant, and I'm building a company that proves it.

There are moments where it's borderline creepy. When the system anticipates what I need before I ask. When it connects information from a meeting three weeks ago to a decision I'm making today. When it flags that I'm avoiding a task I should be doing.

But here's what I've learned: leaning into the discomfort is the only option that makes sense. The companies that wait for AI to feel comfortable will find themselves competing against companies where two people do the work of ten. Not because they work harder, but because they've given AI enough context to be genuinely useful.

We're aiming for Model First by December. Two months in, I'm starting to think we'll get there sooner.

Everything in this article is what we practice in Product Masterclass. Every week, participants work with skills, prompts, and structured context to build an AI-first workflow suited to product management. If you want to build your own version of this, check out the next cohort.
